AND TOUCH COMBINED FOR ENHANCED ROBOTICS CAPABILITIES

BUILDING INTELLIGENT, POWER EFFICIENT ROBOTS BY COMBINING THE HIGH PERFORMANCE OF EVENT-BASED VISION WITH TOUCH

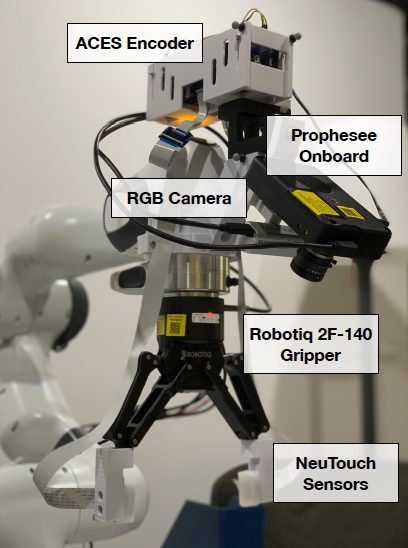

Researchers at the Collaborative, Learning, and Adaptive Robots (CLeAR) Lab and the TEE Research Group at the National University of Singapore are combining Event-Based Vision with touch to build new visual-tactile datasets for developing better learning systems in robotics. This neuromorphic fusion of touch and vision is being used to help robots grip and identify objects.

Their research work on ‘electronic skin’ uses Prophesee’s Metavision Event-Based Vision sensor, and demonstrates how touch and vision can be combined for enhanced capabilities in robotics.

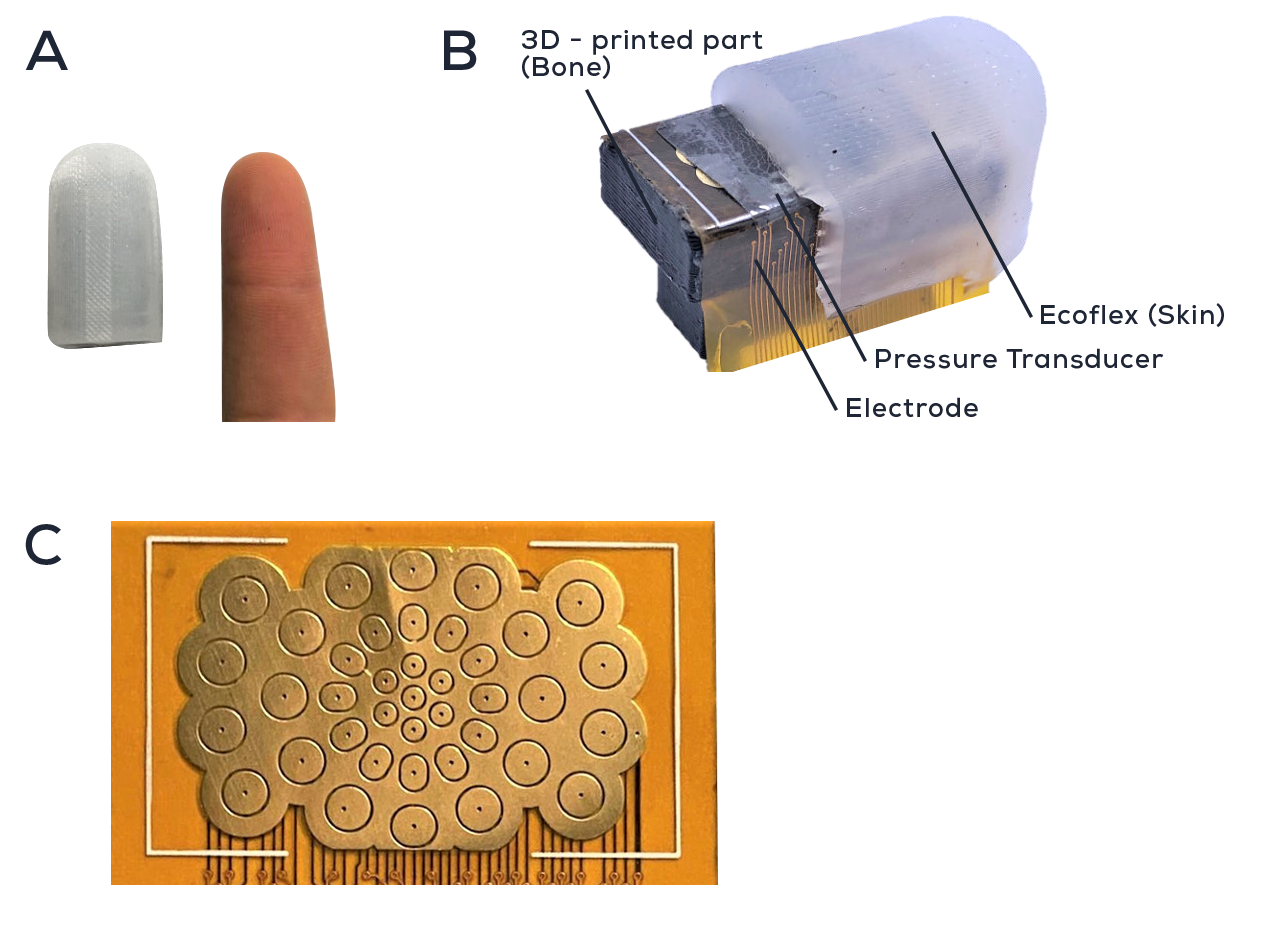

According to a report by EE Times, the new tactile sensor they used, NeuTouch, consists of an array of 39 taxels (tactile pixels), with movement transduced by a graphene-based piezo-resistive layer; you can think of this as the front of the robot’s fingertip.

It is covered with a protective Ecoflex layer that helps to amplify the stimuli, and is supported by a 3D-printed “bone”. The fingertips can then be added to grippers.

Similar to how the independent pixels in Prophesee’s Metavision sensor collect data from changes in the environment, the NeuTouch fingertips process tactile spikes. The use of neuromorphic technology results in high performance and low power consumption, allowing automation systems to touch and see.
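The change-driven principle described above can be illustrated with a small sketch. This is not the NeuTouch or Metavision implementation: the function name `to_events`, the threshold value, and the `(time, index, polarity)` event layout are assumptions made for illustration, and only the count of 39 taxels comes from the article. Each element keeps its own reference level and emits an event only when its reading moves far enough away from that level.

```python
import numpy as np

NUM_TAXELS = 39  # taxel count from the article; everything else is assumed


def to_events(samples, threshold=0.1):
    """Turn dense readings (time x elements) into sparse change events.

    Hypothetical helper: returns a list of (time_step, index, polarity)
    tuples, where polarity is +1 for an increase and -1 for a decrease.
    """
    samples = np.asarray(samples, dtype=float)
    reference = samples[0].copy()  # level at which each element last fired
    events = []
    for t in range(1, len(samples)):
        diff = samples[t] - reference
        # Each element is checked independently against its own reference.
        fired = np.flatnonzero(np.abs(diff) >= threshold)
        for i in fired:
            events.append((t, int(i), 1 if diff[i] > 0 else -1))
        # Only elements that fired update their reference level.
        reference[fired] = samples[t, fired]
    return events


# Synthetic example: one taxel is pressed at step 2 and released at step 4.
readings = np.zeros((5, NUM_TAXELS))
readings[2:4, 7] = 0.5
print(to_events(readings))
```

In this synthetic example, 195 dense readings (5 time steps of 39 taxels) reduce to two events, one for the press and one for the release. Elements that do not change produce no output at all, which is the intuition behind the low data rates and power consumption attributed to event-based sensing.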

(A) NeuTouch compared to a human finger.

(B) Cross-sectional view of NeuTouch and constituent components.

(C) Spatial distribution of the 39 taxels on NeuTouch.

The approach is also being applied to what the researchers call a slip test, in which the robot determines when the pressure it applies is not quite enough to grip an object securely. The aim is to improve machine reaction time and prevent drops and the fallout of such accidents.

This research demonstrates how the sensors collect relevant information and combine it at the same time, mimicking the way the human brain processes sensory input. Benjamin Tee, Harold Soh and their colleagues have made the datasets available to other researchers to aid the development of intelligent, power-efficient robot systems.

ABOUT NATIONAL UNIVERSITY OF SINGAPORE

The National University of Singapore (NUS) has nurtured generations of talent since its founding in 1905. Its 17 faculties across three campuses in Singapore and 12 NUS Overseas Colleges around the world provide a thriving environment for a diverse community of about 55,000 students and staff to work, live and learn together. It pioneers innovative academic programmes, influential research and visionary enterprises to improve lives and contribute to society.

The Collaborative, Learning, and Adaptive Robots (CLeAR) Lab at NUS investigates the science and engineering of human-AI/robot teams. They conduct human-robot-interaction (HRI) experiments and develop novel machine-learning (ML) methods that enable robots to work with people.

The TEE Research Group at NUS is developing new materials, devices and systems for addressing the challenges in human-machine interactions, robotics and biotechnology applications for the AI future.

ABOUT PROPHESEE INVENTORS COMMUNITY

Since 2014, a network of researchers, start-ups and companies have shown incredible imagination and innovation using Prophesee’s neuromorphic vision technologies.

This has created an Event-Based Vision ecosystem of inventors sharing their work and ideas. Their creativity with Prophesee’s technologies inspires us.

We are gathering them here to inspire future inventors in turn, in the hope that, like the projects here, they create something new together and reveal the invisible.