Event cameras represent a shift toward next-generation performance and efficiency for computer vision

Neuromorphic engineering is a type of electronic design and implementation that mimics biology. The fundamental premise is that biology has evolved over time to make us an extremely efficient and adaptable species, so taking cues from those traits and applying them to machines holds vast potential to improve their performance, power consumption and flexibility.

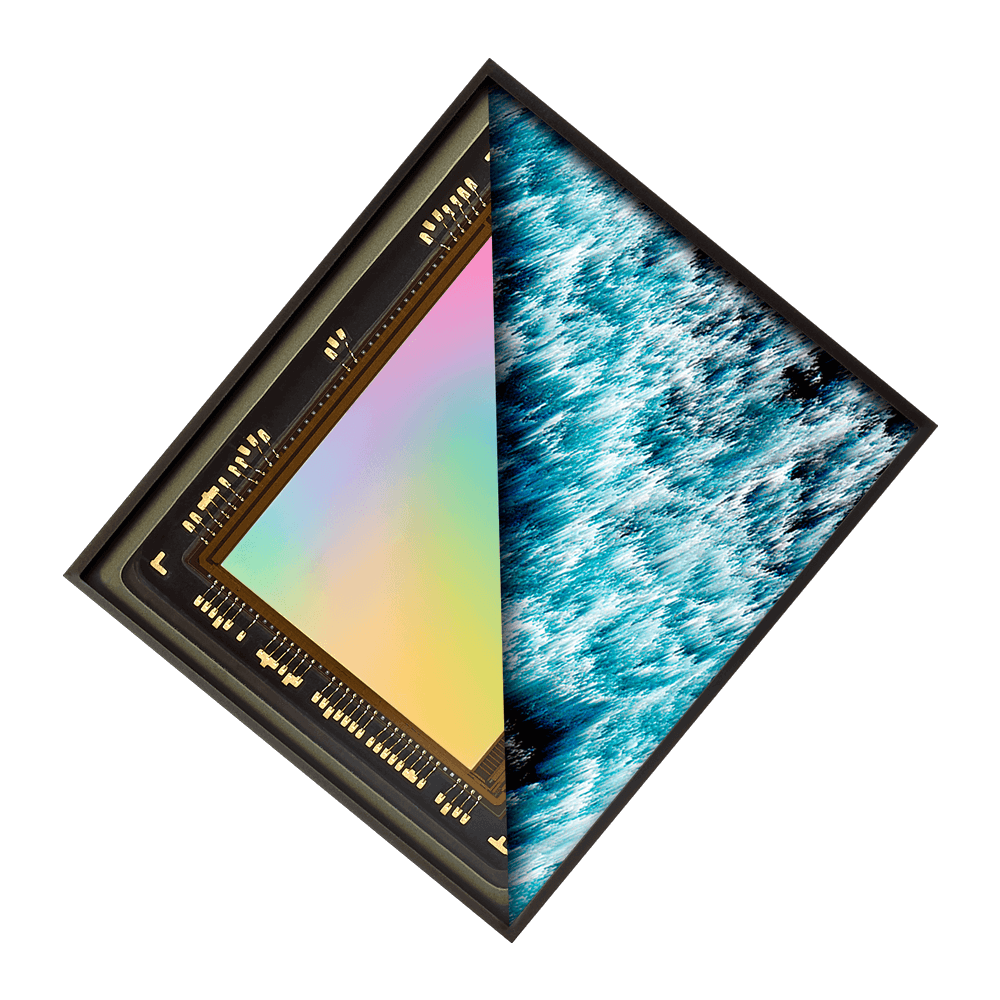

Prophesee focuses on how neuromorphic technology can be used for vision – basing its research and development on how the brain and eyes work together to allow us to see and process visual information in an optimal way. We call the approach Event-Based Vision and it is based on the fact that, like the human eye, machines can operate more effectively if they only process changes in a scene instead of processing everything in a scene all the time. The result is far better performance, less bandwidth required, and much improved energy efficiency. It also lends itself to enhanced operational characteristics in challenging lighting conditions.

This development has given rise to the event camera, a paradigm shift from the traditional (frame-based) camera. Event cameras can deliver new levels of performance and efficiency in a wide range of applications and use cases, from autonomous mobility, to ADAS applications, to industrial automation, AR/VR systems and mobile devices.

How event cameras work

In a conventional camera, an entire image (i.e. the light intensity at each pixel) is recorded at a pre-assigned interval set by the frame rate. While this works well in representing the ‘real world’ when displayed on a screen, recording the entire image at every interval over-samples all the parts of the image that have not changed. With frame-based cameras, this means that a lot of unnecessary data is acquired, especially at high frame rates, whereas the most dynamic part of the image is under-sampled. The human eye (on which Prophesee’s technology is based) samples changes at up to 1000 times per second but does not record the primarily static background at such high frequencies.

Event cameras are a biologically inspired solution to this under- and over-sampling. In an event camera, each pixel of the Event-Based Vision sensor works independently (i.e., asynchronously). Each pixel reports an event only when the illumination it measures rises or falls by more than a defined percentage of its previous intensity. As such, all the dynamic information is recorded, but not the unnecessary static information such as background landscapes or sky. An added benefit of this asynchronous approach is a much-enhanced dynamic range, since the pixel sensitivity does not have to be set globally in the event camera.

This makes them extremely useful for scenes where there is motion – think: high-speed counting, or driver safety systems.
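The per-pixel behavior described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not Prophesee's sensor design or the Metavision API: the `Event` record, the `simulate_events` function, the 20% threshold and the use of frame indices as timestamps are all hypothetical choices made for illustration. A real sensor reacts continuously in time rather than to a sequence of frames.

```python
import math
from typing import List, NamedTuple, Sequence


class Event(NamedTuple):
    """Hypothetical event record (illustration only, not an SDK type)."""
    t: int         # frame index, standing in for a timestamp
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 = got brighter, -1 = got darker


def simulate_events(frames: Sequence[Sequence[Sequence[float]]],
                    threshold: float = 0.2) -> List[Event]:
    """Emit an event when a pixel changes by more than a relative threshold.

    Assumption: the change is measured in log intensity, so a pixel fires
    when it brightens by a factor of at least (1 + threshold) or darkens
    by the inverse factor. All intensities must be strictly positive.
    """
    height, width = len(frames[0]), len(frames[0][0])
    log_threshold = math.log(1.0 + threshold)
    # Each pixel keeps its own reference level, independent of its neighbors.
    reference = [[math.log(frames[0][y][x]) for x in range(width)]
                 for y in range(height)]
    events: List[Event] = []
    for t, frame in enumerate(frames[1:], start=1):
        for y in range(height):
            for x in range(width):
                level = math.log(frame[y][x])
                delta = level - reference[y][x]
                if abs(delta) >= log_threshold:
                    events.append(Event(t, x, y, 1 if delta > 0 else -1))
                    # The reference only moves when the pixel fires.
                    reference[y][x] = level
    return events


# A 3x2 scene with a static background; one pixel brightens, holds, then darkens.
background = [[100.0, 100.0, 100.0], [100.0, 100.0, 100.0]]
bright = [[100.0, 150.0, 100.0], [100.0, 100.0, 100.0]]
dark = [[100.0, 90.0, 100.0], [100.0, 100.0, 100.0]]
frames = [background, bright, bright, dark]

for event in simulate_events(frames):
    print(event)
```

In this toy scene the frame-based representation stores 24 pixel values (4 frames of 6 pixels), while the simulation emits only two events, both from the single pixel that changed. The static background produces no data at all.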

Giving camera developers a head start

Adopting a new approach can be challenging, and Prophesee has a range of development aids to help machine vision experts implement this new method.

At the core of our solution is the event-based Metavision sensor, available today in 320x320 px, VGA or HD resolution. It is the foundation on which an event camera can be built. The Metavision platform offers evaluation and development kits that can be used to experiment with design ideas and understand the operational characteristics of an event camera. Our Evaluation Kits come with complimentary access to an advanced set of tools composed of a support portal, drivers, open-source platform, SDK, tutorials, reference data and much more.

In addition, the Metavision Intelligence Suite is a key enabler for developers of event cameras. The offering contains a range of development tools and ready-to-use modules that allow efficient implementation of machine vision systems, as well as customizations for specific application requirements. With this development environment and these resources, Prophesee is helping expand the ecosystem around event cameras to make it easier to adopt them and to develop use-case-specific systems that benefit from their advantages.

Realizing various applications

The applications included in the Metavision Intelligence Software Suite allow for major enhancements in industrial automation, IoT, mobile, automotive and healthcare. The suite also includes modules for Machine Learning, beginning with access to the extensive dataset of real sequences that Prophesee has created over the past four years. Developers can use a variety of tools to guide the development of neural network models, covering supervised training for object detection and self-supervised training for optical flow, and to run inference on event-based data, all optimized for event cameras.

Build your own vision

OpenEB, a set of key open-source software modules, is available through GitHub and allows designers to build custom plugins and ensure compatibility with the Metavision Intelligence Suite. The suite also features a set of new Event-Based Machine Learning solutions aimed at optimizing ML training and inference for event-based applications, including optical flow and object detection. Additionally, the industry’s largest HD event-based dataset is offered to developers as a free download. Currently there are 3,440+* worldwide users of the software.

*As of March 4, 2022

Event cameras in action

Several camera makers are taking advantage of the Event-Based Vision approach. Industrial-grade event cameras, including SilkyEvCam by Century Arks and VisionCam by IMAGO Technologies, as well as the Triton™ Factory Tough™ GigE Vision camera prototype, leverage our event-based vision sensors and feature full native compatibility with Metavision® Intelligence software.

The Sony IMX 636/637 stacked Event-Based Vision sensors, co-developed with Prophesee and featuring the industry’s smallest pixel size of 4.86 μm, are also fully compatible with the Metavision Intelligence Suite. Combining the IMX 636/637 with this software will enable efficient application development and provide solutions for various use cases.